By Mike Phillips

When a prospective law student types “best law schools for public interest careers” into Google today, they may never scroll to a single website. An AI-generated summary appears at the top of the page — synthesized from dozens of sources, presented as a confident answer, attributed to no one in particular. The student reads it, maybe clicks one link, and moves on.

AI search optimizes for confidence, not completeness.

This is the new reality of how people research major life decisions. And for students exploring graduate and professional programs — law school, medical school, MBA programs, graduate education — the shift has consequences that most admissions content hasn’t caught up with yet.

The Old Search Model Is Breaking Down

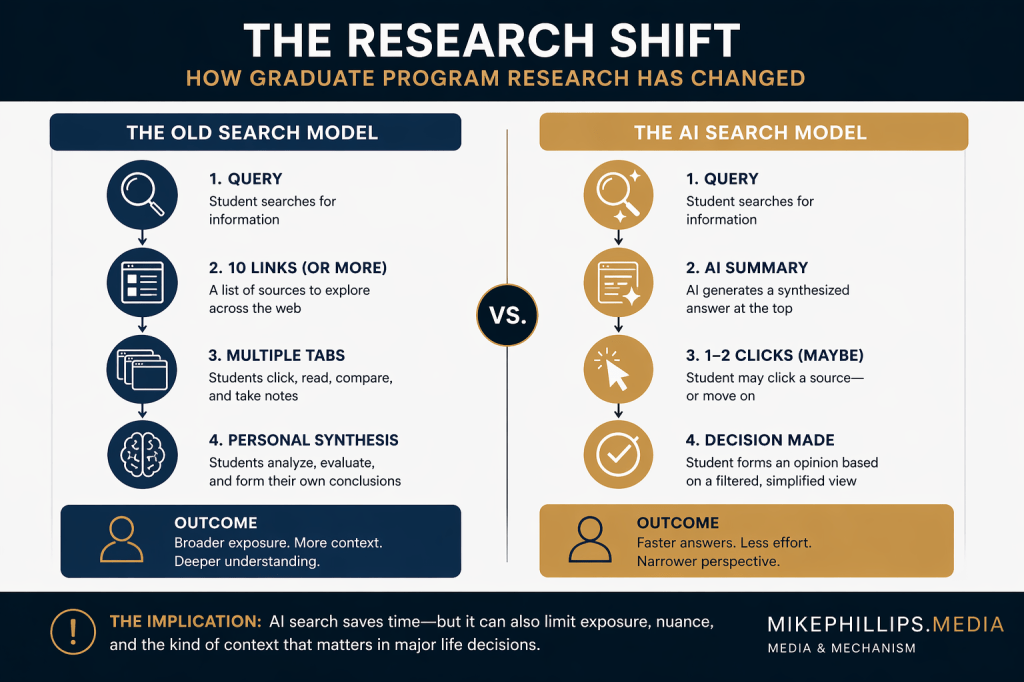

For the past two decades, search worked in a way that favored exploration. You typed a question, got a list of links, clicked around, compared sources, developed your own synthesis. It was time-consuming, but it meant students encountered a range of perspectives and institutions before forming a view.

AI-powered search — Google’s AI Overviews, ChatGPT’s browse mode, Perplexity, and similar tools — compresses that process dramatically. The model reads the web on your behalf and hands you a summary. For straightforward factual questions, this is genuinely useful. For complex decisions like graduate program selection, it introduces a new set of risks that students, institutions, and the people who help them navigate admissions need to understand.

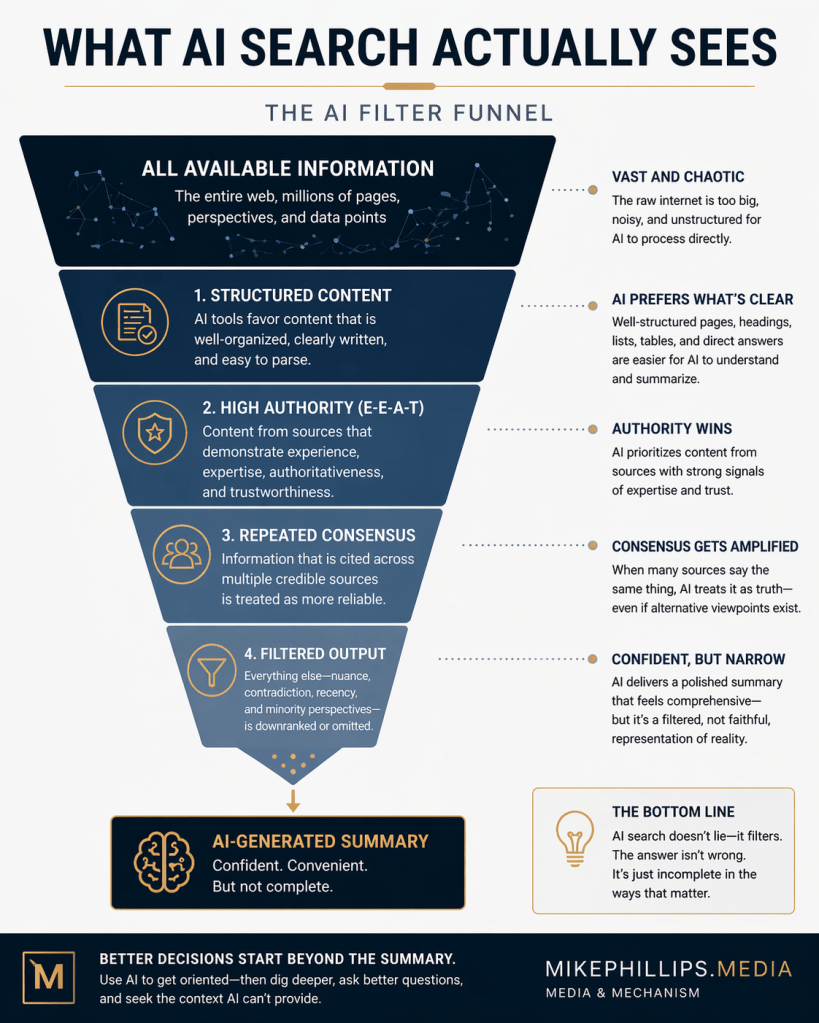

The core problem is this: AI search optimizes for confidence, not completeness. It surfaces information that is well-structured, frequently cited, and presented with authority. What it often misses is nuance, recency, and the kind of contextual judgment that actually matters when you’re choosing where to spend three years and six figures of your life.

What AI Search Actually Rewards

Understanding how AI search works helps explain what students are — and aren’t — seeing when they research programs.

Large language models and AI search tools learn to identify authoritative content through signals that aren’t entirely different from traditional SEO, but with some meaningful distinctions. Content that gets cited in AI overviews tends to be:

Structured clearly. AI tools parse content that is organized with logical headings, defined terms, and direct answers to specific questions. A 4,000-word essay that buries its main point in paragraph twelve tends to lose to a well-organized guide that answers the question in the first three sentences.

Demonstrably expert. Google’s E-E-A-T framework (Experience, Expertise, Authoritativeness, Trustworthiness), set out in its Search Quality Rater Guidelines, predates AI Overviews, but it maps closely onto what AI tools appear to privilege. Content written or reviewed by people with genuine credentials on a topic surfaces more reliably than content that merely sounds authoritative.

Consistent across sources. When multiple credible sources say the same thing, AI tools treat it as more reliable. This creates an echo chamber effect: the consensus view gets amplified, while minority perspectives and emerging research tend to be filtered out.

For a student trying to understand the difference between MD and DO programs, or evaluate whether a part-time MBA makes sense for their career stage, the information that surfaces in AI search will tend to reflect mainstream consensus. That’s fine for some questions. For others — especially questions where the right answer depends heavily on individual circumstances — it can be actively misleading.

The Information That Gets Lost

The gap between what AI search delivers and what students actually need is most visible in a few specific areas.

Program fit is not a searchable question. Determining whether a particular law school’s culture, clinical offerings, or loan repayment assistance program matches a specific student’s goals requires contextual matching that AI summaries cannot perform. Students who rely primarily on AI-generated research tend to receive well-packaged general information (average LSAT ranges, employment statistics, rankings methodology) without the framework to evaluate what those data points mean for them specifically.

Admissions strategy is evolving faster than the indexed web. The models behind AI search are trained on data with a cutoff date, and even tools that retrieve live pages can only summarize what has already been published and indexed. Admissions trends, yield management practices, and scholarship negotiation dynamics shift year to year. A student relying on AI-summarized research may be working from a mental model of the admissions process that is one or two cycles out of date. The problem is not that the information is wrong, but that the most current intelligence lives in practitioner communities, recent application forums, and the working knowledge of people inside the process.

Institutional red flags rarely surface in SEO-optimized content. The information schools publish about themselves — and that third-party content farms produce about schools — is overwhelmingly positive. AI search draws heavily from this corpus. Critical reporting, student outcome data that doesn’t appear in official statistics, and community-level intelligence about program weaknesses are systematically underrepresented in what AI tools return.

What This Means for How Students Should Research

None of this is an argument against using AI search tools. Used well, they are genuinely useful for getting oriented quickly, generating a list of questions to investigate further, and understanding the general landscape of a field. The problem is treating them as a research endpoint rather than a starting point.

A more useful framework treats AI search as the first layer of a multi-layer process:

Use AI tools to map the terrain, not to make the decision. AI overviews are well-suited for understanding what the major considerations are, what terminology means, and what questions you should be asking. They are poorly suited for answering whether you specifically should attend a particular program.

Seek out sources that AI search systematically underweights. Recent forum discussions, student-run publications, investigative journalism on graduate education, and direct conversations with current students and alumni carry information that doesn’t make it into AI summaries. These sources require more effort to find and evaluate, but they’re often where the most useful signal lives.

AI should map the terrain — not make the decision.

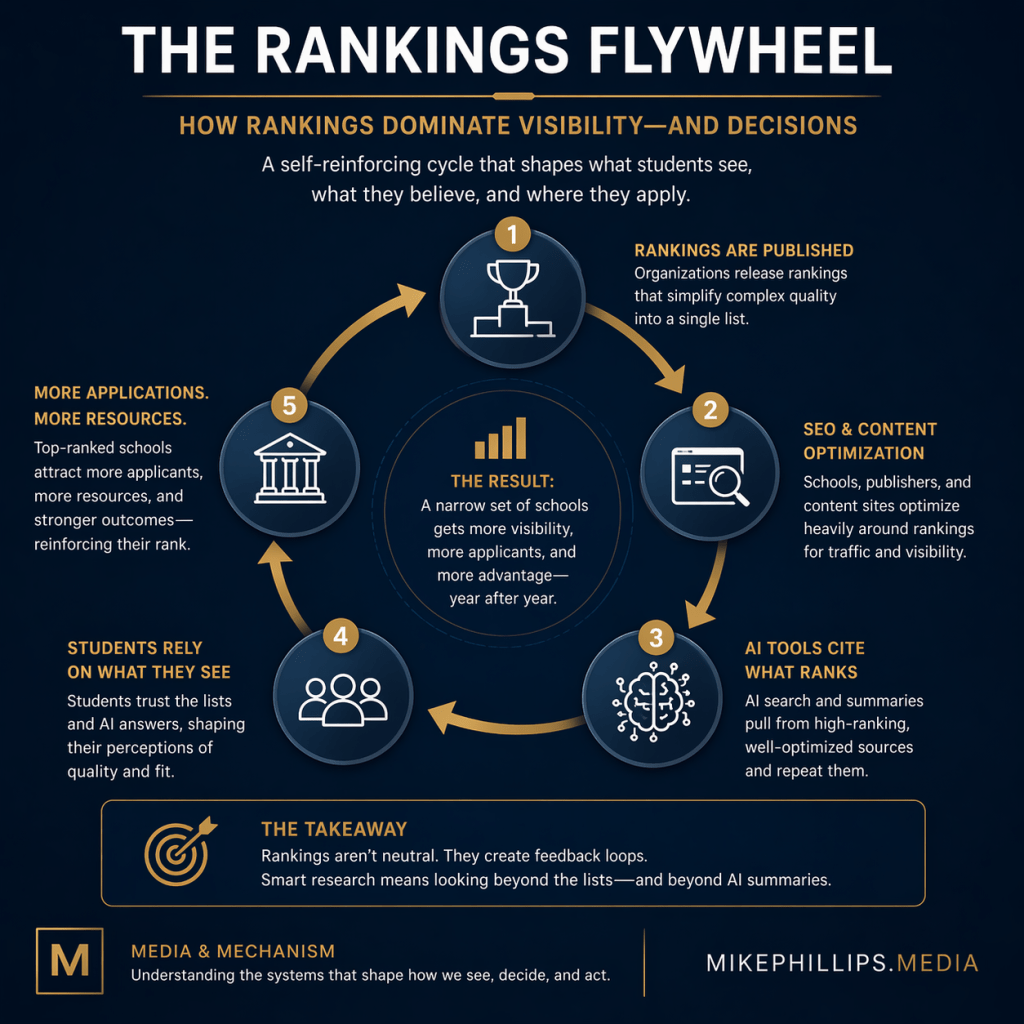

Treat rankings with more skepticism, not less. Rankings content is among the most search-optimized material on the internet, and it surfaces constantly in AI-generated research. Running “best MBA programs for career changers” through Perplexity in April 2026 returns a confident, well-structured list: Stanford, Harvard, Wharton, Booth, Kellogg — the usual suspects, sourced primarily from a Fortune rankings piece and a LinkedIn article. The answer is not wrong. But it tells you nothing about yield management, scholarship negotiation, which programs are actively recruiting career changers in your specific function, or how admissions committees at each school actually read a non-traditional profile. The methodology debates, the ways institutions game ranking metrics, and the limited correlation between rank and individual outcome are all absent. A student whose graduate school research is primarily AI-mediated is more likely to over-index on rankings than one who reads more broadly — not because the AI is lying, but because rankings content dominates the indexed web and AI tools reflect that dominance faithfully.

Verify recency explicitly. When AI tools provide statistics or characterizations of programs, make a habit of checking the date on the underlying source. Admissions data from three years ago may no longer reflect current reality. Employment outcomes reported in AI summaries may predate significant changes in a field.

What Institutions and Advisors Need to Understand

The shift to AI-mediated research has implications beyond individual students. Institutions, admissions consultants, and anyone producing content in the graduate education space are operating in an environment where the rules for being found and understood have changed.

Content that was well-optimized for traditional search — keyword-dense, link-rich, high page-count — does not automatically perform well in AI search environments. The new optimization target is structured, genuinely authoritative content that answers specific questions completely and accurately. Thin content that existed primarily to rank for head terms is getting filtered out of AI-generated summaries at a higher rate than substantive content.


For admissions coaching services specifically, this creates both a challenge and an opportunity. The challenge is that AI tools are now providing a version of the general admissions guidance that was previously a value-add service — program overviews, timeline frameworks, basic application strategy. The opportunity is that the genuinely high-value work — the contextual judgment, the current-cycle intelligence, the individualized strategy — is precisely what AI search cannot replicate. Positioning around that distinction is increasingly important.

The Deeper Issue

There’s a version of this conversation that frames AI search as a problem to be solved — something students need to work around to get to the real information. That framing misses something important.

The rise of AI-mediated research is accelerating a shift that was already underway: the devaluation of generic information and the increased premium on genuine expertise, current knowledge, and individualized guidance. For students willing to go beyond the AI summary, the information environment is in many ways richer than it’s ever been — primary sources are more accessible, practitioner communities are more connected, and the tools for synthesizing large amounts of information are genuinely powerful.

The advantage now belongs to the student who knows what AI leaves out.

The students who will navigate this environment most successfully are those who understand what AI search is good at, recognize what it misses, and build research habits that account for both. That’s not a technical skill. It’s a critical thinking skill that happens to be newly important in 2026.
